How to Perform a Comprehensive SEO Audit

There are about 3 MILLION search results for anything related to “SEO audit.” Sifting through them can leave you feeling like you’ve taken crazy pills.

I’ve seen it a million times: marketers put their blinders on and get hyper-focused on one aspect of SEO that may account for only 5% of their ranking performance. Understanding SEO ranking factors helps you prioritize the biggest opportunities for improvement. Note: if you’re a local business with a brick-and-mortar storefront and want to focus on showing up in Google Maps and the local 3-pack, you’ll want to read the blog on local ranking factors.

This post explains the ranking factors in four SEO categories: accessibility, indexability, on-page factors, and off-page factors.

Accessibility

The most important question in SEO: is your site accessible to search engines? Let’s find out!

Robots.txt: If you haven’t heard of robots.txt, now you have. Robots.txt is a plain-text file at the root of your domain (yoursite.com/robots.txt) that tells search engine crawlers which parts of your site they may crawl. You can check it manually by opening that URL in your browser, but I prefer to use Google Search Console, which flags pages blocked by robots.txt. Search Console also lets you submit your XML sitemap to Google; it then reports how many of the submitted pages were indexed, warns you if something doesn’t look right, and offers suggestions when it detects a problem. Here’s a helpful video on submitting a sitemap to Google Search Console.
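
If you want to spot-check robots.txt rules outside Search Console, Python’s standard-library `urllib.robotparser` can evaluate them. This is a minimal sketch, assuming a hypothetical robots.txt and hypothetical example.com URLs; it reports whether Googlebot may crawl each URL under those rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents. In a real audit you could instead call
# parser.set_url("https://yoursite.com/robots.txt") followed by parser.read().
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /staging/
"""


def crawl_permissions(robots_txt, urls, agent="Googlebot"):
    """Return {url: True/False} showing whether `agent` may crawl each URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(agent, url) for url in urls}


if __name__ == "__main__":
    pages = [
        "https://example.com/blog/seo-audit/",
        "https://example.com/staging/new-home/",
    ]
    for url, allowed in crawl_permissions(ROBOTS_TXT, pages).items():
        print("allowed" if allowed else "BLOCKED", url)
```

A page you want ranked should never show up as blocked; a staging area showing up as blocked is usually intentional.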

If you’re using WordPress, you can install a plugin like Yoast to generate an XML sitemap, and it will even submit it to Search Console if connected. If you don’t use WordPress, most CMSs have similar plugins or integrations.
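
For a site without a sitemap plugin, a basic XML sitemap is simple to generate. This is a minimal sketch in the sitemaps.org 0.9 format, assuming you already have a list of page URLs and last-modified dates (the URLs and dates here are hypothetical).

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap.xml text from (loc, lastmod) pairs; lastmod uses YYYY-MM-DD."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # ElementTree escapes &, <, > for us
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_sitemap([
        ("https://example.com/", "2024-01-15"),
        ("https://example.com/blog/seo-audit/", "2024-02-01"),
    ]))
```

Save the output as sitemap.xml at the root of the site, then submit its URL in Search Console.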

Website Architecture: Sounds complicated, right? It’s really not. Site architecture is how well your website is organized: how many clicks does it take users to get to a specific article or page? The fewer clicks, the better. Search engines like websites with accessible content, which means cross-linked content, a site search, well-organized navigation, and related posts. Many tools will report your “crawl depth” (the number of clicks needed to reach a page from the homepage); you can use Semrush or Screaming Frog to measure it, along with many of the other metrics in your audit.
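
Crawl depth is straightforward to compute once you have your internal links: it is a breadth-first search from the homepage, where each page’s depth is the fewest clicks needed to reach it. This sketch assumes a hypothetical link graph (the kind of data a crawler can export); pages that never appear in the result are orphans that no internal link reaches.

```python
from collections import deque

# Hypothetical internal-link graph: page -> pages it links to.
SITE = {
    "/": ["/blog/", "/services/"],
    "/blog/": ["/blog/seo-audit/", "/blog/page-2/"],
    "/blog/page-2/": ["/blog/old-post/"],
    "/services/": ["/contact/"],
    "/landing-2019/": ["/services/"],  # nothing links *to* this page
}


def crawl_depth(links, home="/"):
    """Breadth-first search: depth = fewest clicks from the homepage."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:  # BFS reaches each page first by a shortest path
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def orphan_pages(links, home="/"):
    """Pages known to the crawl that cannot be reached from the homepage."""
    known = set(links) | {t for targets in links.values() for t in targets}
    return known - set(crawl_depth(links, home))
```

Here the old blog post sits three clicks deep, which is a hint that it could use a link from a higher-level page.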

Website Page Speed Performance

It’s no surprise that people are impatient when it comes to page load time, and I typically see a direct correlation between load time and bounce rate. Slow-loading pages have higher bounce rates: if a user lands on a page and it takes too long to load (typically more than 2 to 4 seconds), they leave immediately and look for the information elsewhere.

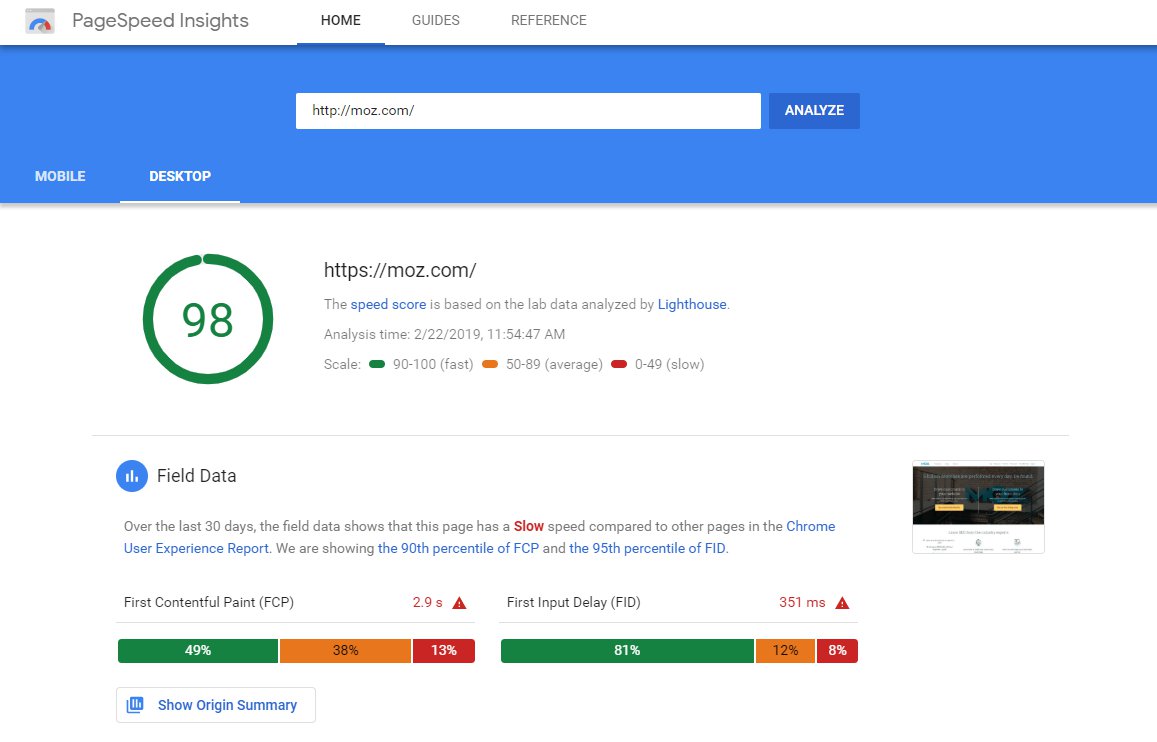

To test page speed, we can use several tools. First, I check mobile and desktop load times with Google’s PageSpeed Insights:
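
If you need to test many URLs, PageSpeed Insights is also available as an API. This sketch assumes the public v5 `runPagespeed` endpoint with its `url` and `strategy` parameters, and reads the Lighthouse performance score (reported from 0 to 1) out of the JSON response; the page URL is hypothetical, and heavier use requires an API key passed as `key`.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url, strategy="mobile"):
    """Build a PageSpeed Insights v5 request URL; strategy is 'mobile' or 'desktop'."""
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


def performance_score(psi_response):
    """Scale the Lighthouse 0-1 performance score to the 0-100 shown in the UI."""
    score = psi_response["lighthouseResult"]["categories"]["performance"]["score"]
    return round(score * 100)


def fetch_score(page_url, strategy="mobile"):
    # Network call; the API can take tens of seconds per page.
    with urlopen(psi_request_url(page_url, strategy), timeout=90) as resp:
        return performance_score(json.load(resp))
```

Running `fetch_score` once with `"mobile"` and once with `"desktop"` gives you the same two numbers the web interface shows.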

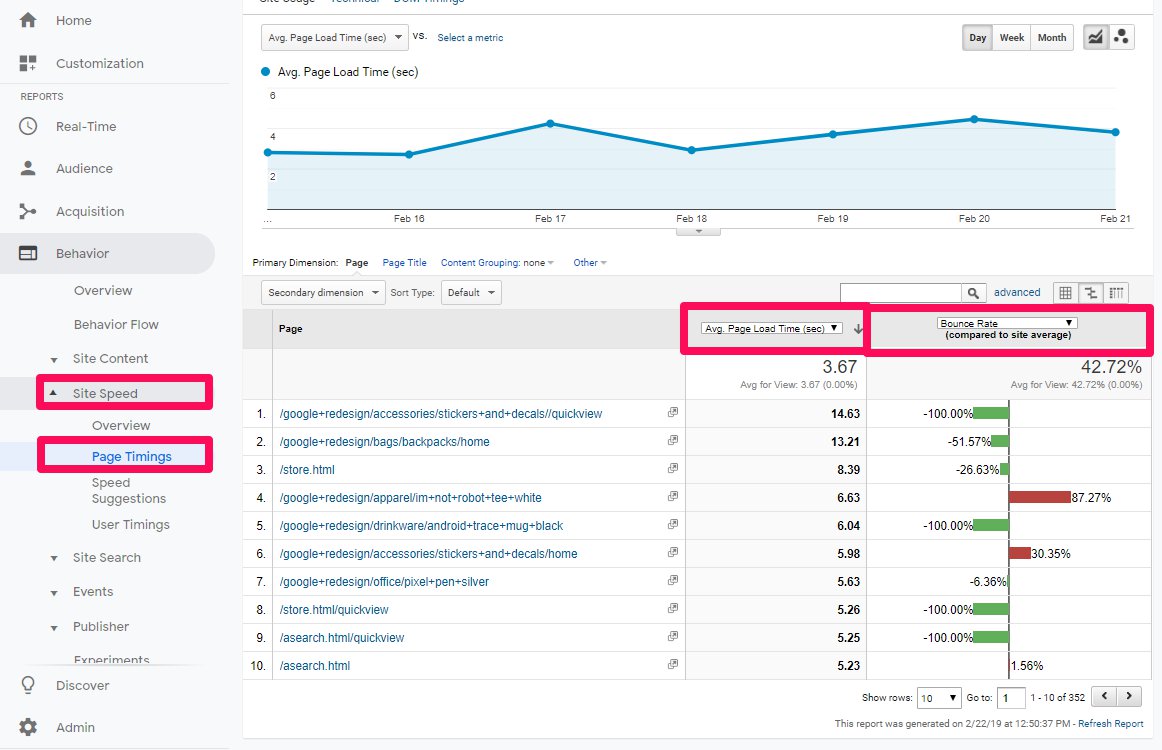

If you have access to the website’s Google Analytics, you can use it to get an accurate picture of how fast the site’s pages load and cross-reference that with metrics like location, bounce rate, and time on page. Each page has its own load time, so compare individual pages against the site’s average load time to see which pages need the most attention. Google Analytics will also give speed suggestions.
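
Once you’ve exported the average load time per page, comparing each page with the site average is simple arithmetic. The load times below are hypothetical, and `threshold` is an arbitrary knob for this sketch: 1.25 flags pages more than 25% slower than the site average.

```python
from statistics import mean

# Hypothetical average load times in seconds, e.g. exported from Google Analytics.
LOAD_TIMES = {"/": 2.1, "/blog/seo-audit/": 5.4, "/contact/": 1.5, "/services/": 3.0}


def slowest_pages(load_times, threshold=1.25):
    """Pages slower than threshold x the site average, slowest first."""
    site_avg = mean(load_times.values())
    flagged = [(page, secs) for page, secs in load_times.items() if secs > site_avg * threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)
```

With these numbers the site average is 3.0 seconds, so only the blog post gets flagged; that is the page to start optimizing.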

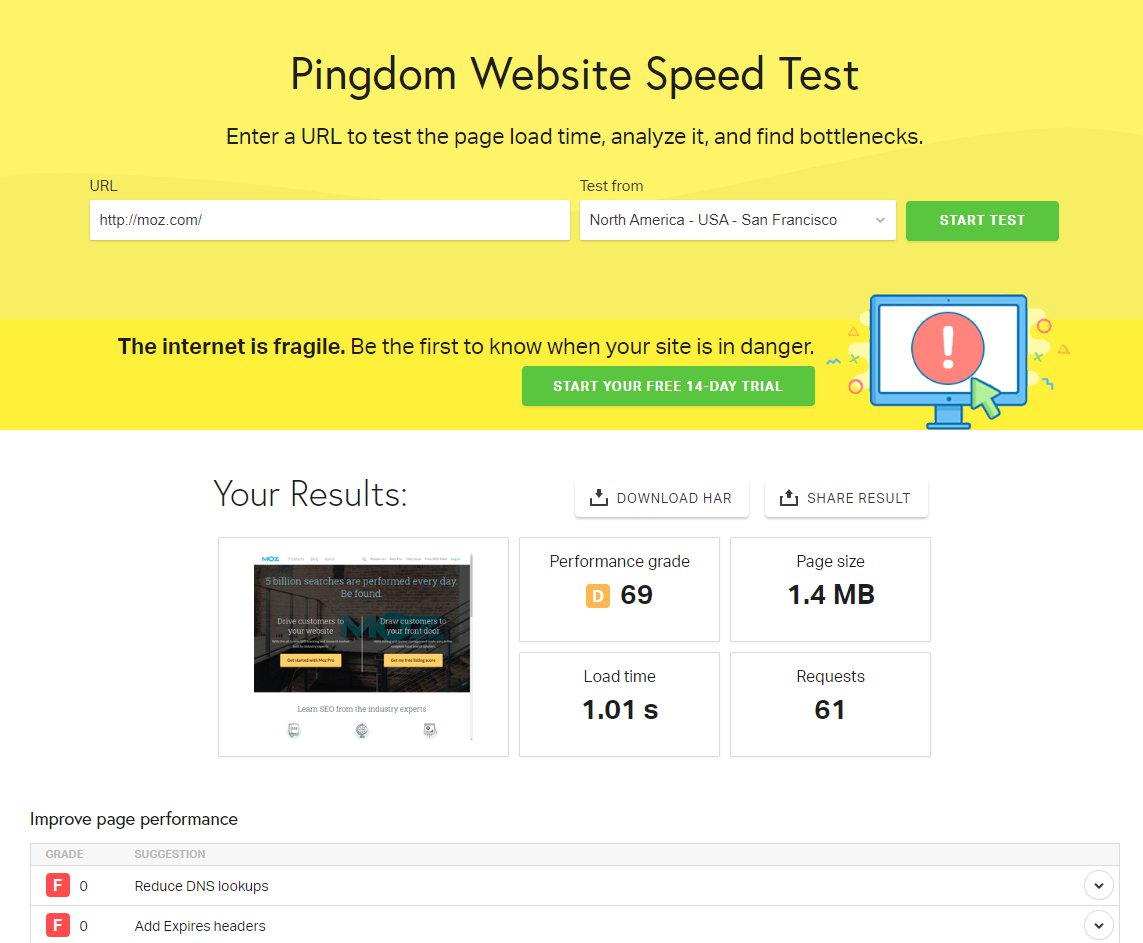

Lastly, Pingdom is a great tool for testing page speed from a specific location, which is useful if you’re planning to target a new region.

Read my blog on improving site speed and load time performance.

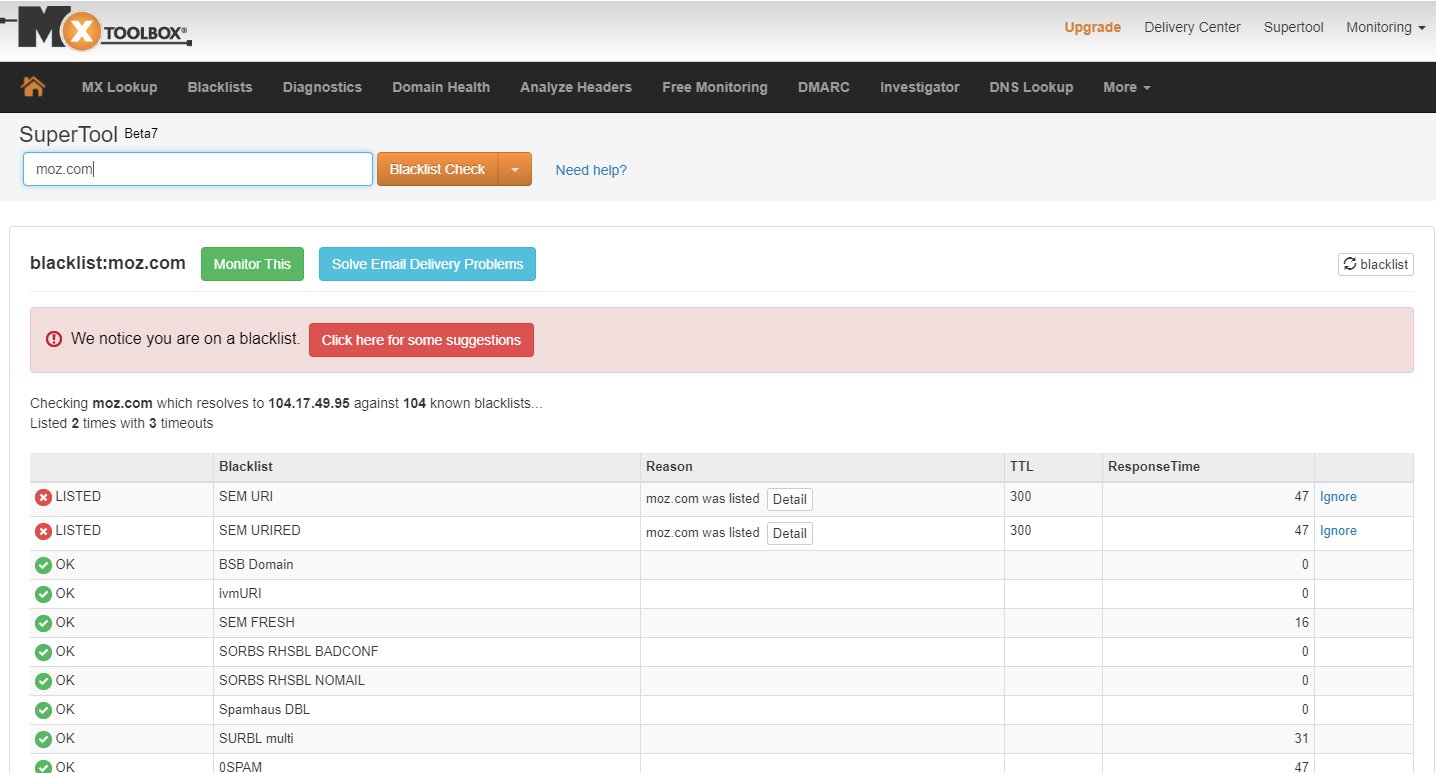

Blacklists and SEO

How do search engines and email servers know whether your site is trustworthy? They weigh many factors, but one of the most decisive is the blacklist. If hackers exploit vulnerabilities in your website, they can install malware that sends out spam from your domain. Before you know it, people are receiving emails that appear to come from your website and marking them as spam. Soon after, your domain or IP address lands on a blacklist that tells search engines and email servers not to trust it. Often the hosting provider will flag your site and take it down to stop more spam from going out. Needless to say, being on a blacklist hurts you on every one of these fronts.

MX Toolbox is a phenomenal tool that can do many things, including scanning the major blacklists to see whether your website is listed on any of them: https://mxtoolbox.com/blacklists.aspx
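
Under the hood, most IP blacklists are DNS-based (DNSBLs): you reverse the IP address’s octets, append the list’s zone, and do a DNS lookup; an answer means the IP is listed. This sketch shows that convention for IPv4, using Spamhaus’s `zen.spamhaus.org` zone purely as an example, whereas MX Toolbox checks dozens of lists at once. Treat it as illustrative: some lists refuse queries that come through public DNS resolvers and answer with special error codes, which this simple version would misread as a listing.

```python
import ipaddress
import socket


def dnsbl_query_name(ip, zone):
    """Reverse the IPv4 octets and append the blacklist zone (DNSBL convention)."""
    octets = str(ipaddress.IPv4Address(ip)).split(".")  # raises ValueError on bad input
    return ".".join(reversed(octets)) + "." + zone


def is_listed(ip, zone="zen.spamhaus.org"):
    """Any A-record answer means 'listed'; a failed lookup (NXDOMAIN) means 'not listed'."""
    try:
        socket.gethostbyname(dnsbl_query_name(ip, zone))
        return True
    except socket.gaierror:
        return False
```

For example, checking 203.0.113.7 against that zone means looking up `7.113.0.203.zen.spamhaus.org`.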

If your site is on a blacklist, getting it removed should be a top priority. This typically requires a developer to clean out the malware and relaunch the site, followed by a removal request to each blacklist that lists you.

On-Page SEO Factors

At this point, we’ve identified site-wide opportunities for improvement; these should be prioritized and completed before moving on to page-level factors.

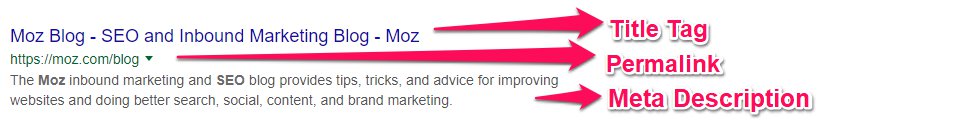

Title tag, permalinks, meta description

These factors are important because they not only tell search engines what your page is about but are also what users see in SERPs (search engine result pages). Search engines take a page’s click-through rate (clicks divided by impressions) into consideration, which is why it’s important to optimize these elements to earn clicks. If a page keeps showing up in search results but no one clicks, search engines will lower its rankings, because the lack of clicks signals that the page is not relevant for those keywords.

Click-through rate is an important engagement factor, but it doesn’t stop there. Search engines also look at user engagement once a link is clicked, so if a page’s bounce rate is particularly high for a keyword, the page will lose rankings for it. This is why the title tag, permalink, and meta description must accurately represent the content found on the page.
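
To audit titles and meta descriptions across many pages, you can pull both out of each page’s HTML and flag missing or overly long ones. The 60- and 160-character limits below are common rules of thumb that I’m assuming here, not limits published by Google; the HTML is hypothetical.

```python
from html.parser import HTMLParser

# Assumed rule-of-thumb limits, not official Google numbers.
TITLE_MAX = 60
DESCRIPTION_MAX = 160


class SnippetParser(HTMLParser):
    """Collects the <title> text and the meta description content."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_snippet(html):
    """Return a list of human-readable issues with the page's title and description."""
    parser = SnippetParser()
    parser.feed(html)
    title = parser.title.strip()
    issues = []
    if not title:
        issues.append("missing title tag")
    elif len(title) > TITLE_MAX:
        issues.append(f"title is {len(title)} chars (> {TITLE_MAX})")
    if not parser.description:
        issues.append("missing or empty meta description")
    elif len(parser.description) > DESCRIPTION_MAX:
        issues.append(f"meta description is {len(parser.description)} chars (> {DESCRIPTION_MAX})")
    return issues
```

Length is only half the check: whether the snippet accurately represents the page still needs a human read.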

Schema markup/structured data/rich snippets

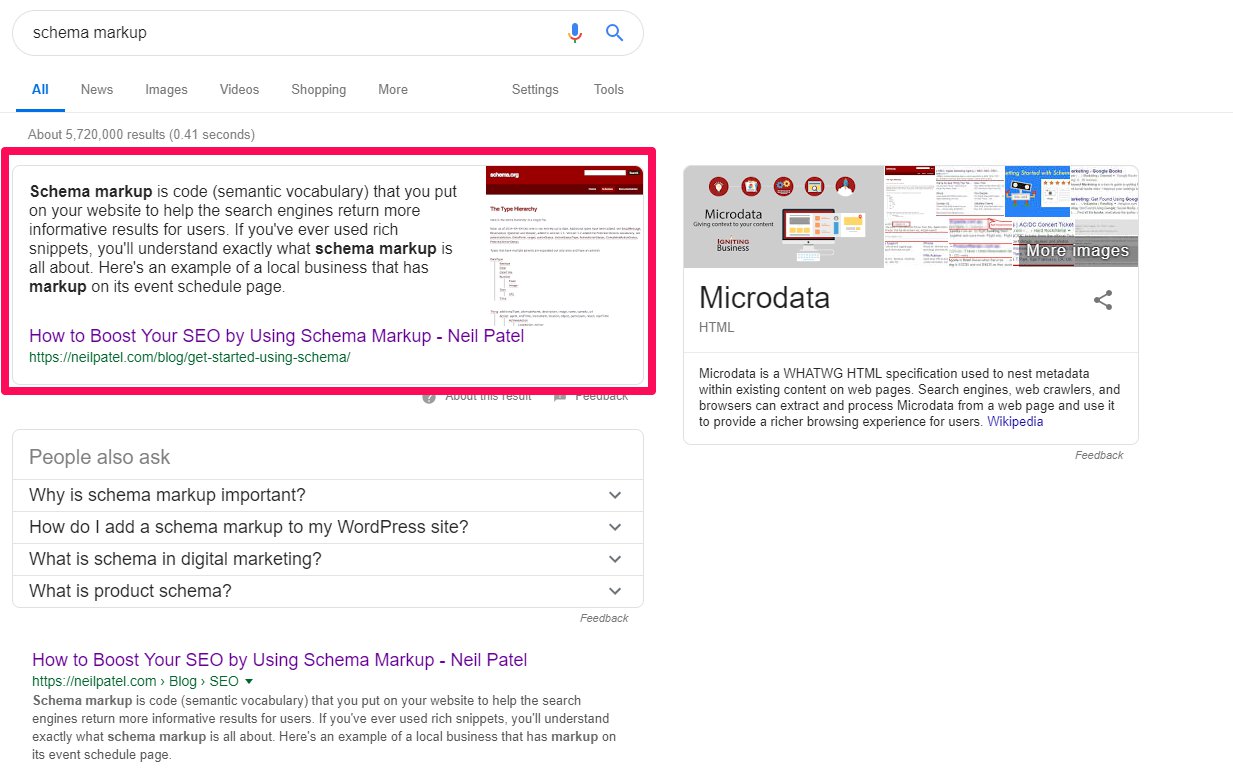

Schema markup is structured data you add to a page so search engines can understand its content and display it as a rich snippet, an enhanced search result. Ever seen search results that look like this?

Example of how blog posts optimized with schema display in search results

This is an example of schema markup at work: search engines now show rich snippets in keyword results, as illustrated in the example above. This result is from Neil Patel’s website.

Schema markup is important because it tells search engines what your web pages are about. Search engines invested time and money in schema to give their users a better experience, and they prioritize websites that optimize their content to take advantage of it. Additionally, results that appear as rich snippets are more likely to be clicked, which greatly improves your click-through rate.

Here are some of the most commonly used schema.org types, which websites can implement if they have any of the following kinds of content:

- Creative works: CreativeWork, Book, Movie, MusicRecording, Recipe, TVSeries …

- Embedded non-text objects: AudioObject, ImageObject, VideoObject

- Event

- Health and medical types: the types grouped under MedicalEntity

- Organization

- Person

- Place, LocalBusiness, Restaurant …

- Product, Offer, AggregateOffer

- Review, AggregateRating

- Action
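
JSON-LD is the format Google recommends for structured data. This sketch builds `BlogPosting` markup (one of the CreativeWork types listed above) for a hypothetical post; the headline, author, date, and URL are placeholders, and the property set is a minimal assumption rather than everything Google supports for articles.

```python
import json


def blog_posting_jsonld(headline, author, date_published, url):
    """Return a <script> block with schema.org BlogPosting markup in JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date, e.g. 2024-01-15
        "mainEntityOfPage": url,
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


if __name__ == "__main__":
    print(blog_posting_jsonld(
        "How to Perform a Comprehensive SEO Audit",
        "Jane Doe",  # hypothetical author
        "2024-01-15",
        "https://example.com/blog/seo-audit/",
    ))
```

The resulting block goes in the page’s `<head>` (or body); plugins like Yoast generate similar markup automatically.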

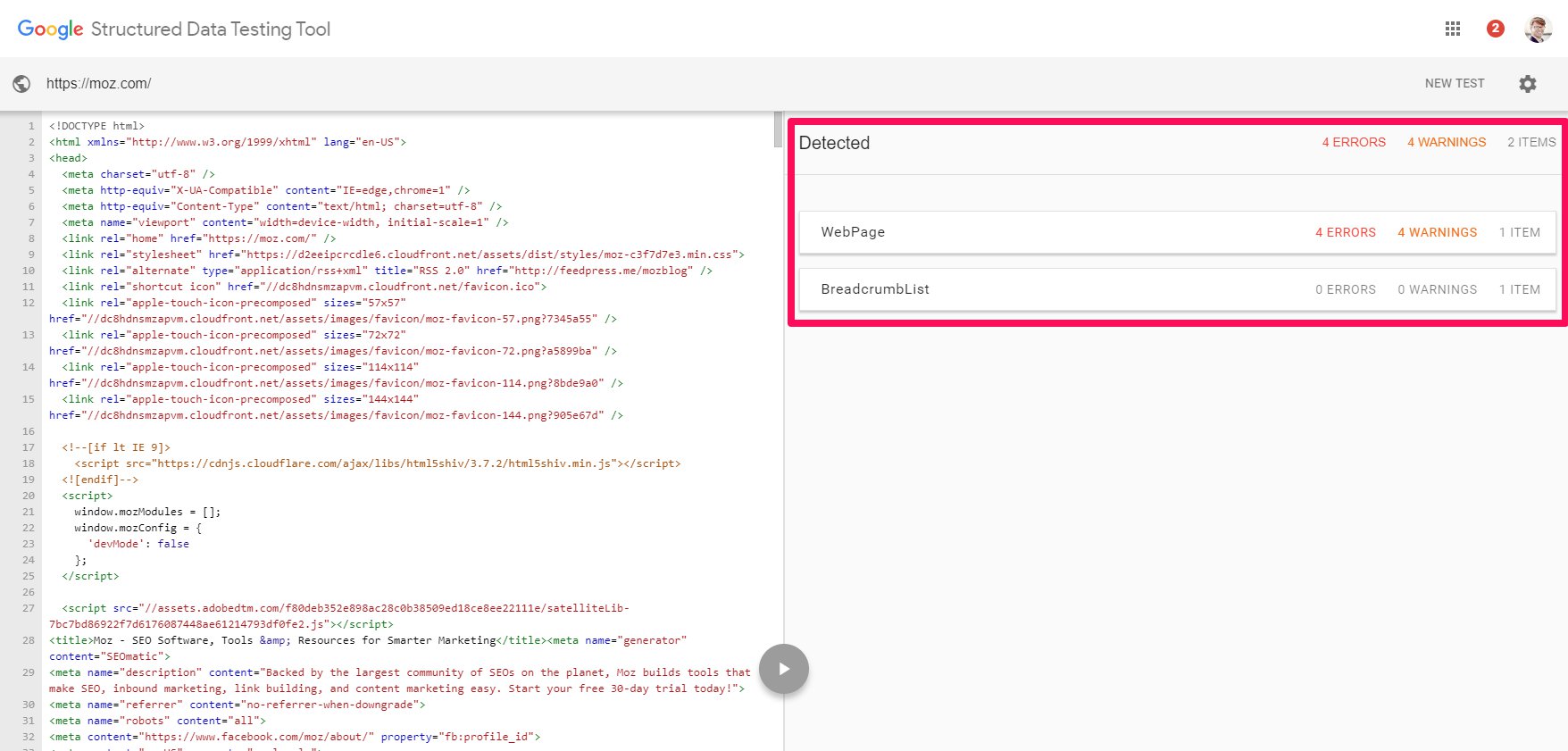

Now that we understand what schema is and why it’s important, we can test our website for rich snippets with Google’s Rich Results Test (which replaced the older Structured Data Testing Tool) or the Schema Markup Validator. These tools tell you whether a web page uses structured data.
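
You can also check a page for structured data yourself by pulling out its JSON-LD blocks and listing their `@type` values. This minimal sketch only confirms that the markup exists and parses as JSON; it ignores microdata and RDFa, does not check Google’s rich result requirements (Google’s testing tools do that), and the HTML is hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdParser(HTMLParser):
    """Collects the contents of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._capturing = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._capturing = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._capturing = False

    def handle_data(self, data):
        if self._capturing:
            self.blocks[-1] += data


def schema_types(html):
    """List the @type of each JSON-LD item on the page; broken JSON is reported too."""
    parser = JsonLdParser()
    parser.feed(html)
    types = []
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            types.append("INVALID JSON-LD")
            continue
        items = data if isinstance(data, list) else [data]
        types.extend(str(item.get("@type", "?")) for item in items if isinstance(item, dict))
    return types
```

An empty list means the page has no JSON-LD at all, which is an easy win to add to your recommendations.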

Content quantity

This should be a no-brainer. Websites without keyword-relevant content will not rank for those keywords. If your website needs to target a keyword like “Best waffles in Philadelphia,” you’d better believe you should have a blog post or landing page optimized for it.

Content quality

The days of keyword-crammed landing pages are long over. Search engines have been wise to this tactic for years, which is why they carefully track each page’s user engagement and use those engagement metrics to gauge content quality.

Here are some quick tips on auditing a page for content quality:

- Does the page contain at least 300 words?

- Does the content bring value? The bounce rate, time on page and click-through rate will help you better understand if it does bring value.

- Does the target keyword show up in your permalink, title tag, and first paragraph? Yoast can help you check this.

- Is the keyword stuffed into the content? Don’t try to game the system! Google is smart, and it doesn’t like spammy keyword-stuffing tactics.

- Is the content grammatically correct and free of spelling errors? Your content should be professionally written without any glaring mistakes.

- Can search engines crawl the content? Infographics and animations are great, but an alt tag won’t give search engines an accurate representation of everything they contain.
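
Several checks in the list above are mechanical enough to script. This sketch assumes you have the page’s target keyword, permalink slug, title, and body text (paragraphs separated by blank lines); it checks the 300-word minimum and keyword placement, and reports keyword density for a manual stuffing review. It uses simple lowercase substring matching, so it will miss plurals and synonyms, and the example page is hypothetical.

```python
import re


def _words(text):
    return re.findall(r"[A-Za-z0-9']+", text)


def content_checks(keyword, slug, title, body, min_words=300):
    """Pass/fail checks; min_words=300 follows the rule of thumb above."""
    kw = keyword.lower()
    paragraphs = [p for p in body.split("\n\n") if p.strip()]
    first_paragraph = paragraphs[0].lower() if paragraphs else ""
    return {
        "enough_words": len(_words(body)) >= min_words,
        "keyword_in_slug": kw.replace(" ", "-") in slug.lower(),
        "keyword_in_title": kw in title.lower(),
        "keyword_in_first_paragraph": kw in first_paragraph,
    }


def keyword_density(keyword, body):
    """Keyword occurrences per 100 words (substring count, so 'audits' also matches 'audit')."""
    words = _words(body)
    if not words:
        return 0.0
    return 100 * body.lower().count(keyword.lower()) / len(words)
```

There is no magic density number; a figure that looks high is simply a cue to reread the copy for stuffing.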

Here are the top engagement metrics you should include in your audit (you will need access to Google Analytics for this):

- Bounce Rate: The percent of users who land on a webpage and leave without clicking to another page.

- Click-Through Rate: The percentage of users who click on your page after seeing it in search results (clicks divided by impressions).

- Time on Page: How long users stay on a page. (Google Analytics ends a session by default after 30 minutes of inactivity.)

- Return Visits: Are visitors coming back to your website once they leave?

- Calls and Directions: If a website promotes a local business, search engines take into consideration how many calls and direction requests the local listing receives. You can find these numbers in Google Business Profile (formerly Google My Business).
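
These metrics are all ratios, so it helps to be explicit about what is divided by what. A minimal sketch, assuming the definitions above for bounce rate and return visits, with click-through rate computed as clicks divided by impressions (the way Search Console reports it); the numbers are hypothetical.

```python
def percent(part, whole):
    """part / whole as a percentage, or 0.0 when there is no data yet."""
    return 100 * part / whole if whole else 0.0


def bounce_rate(single_page_sessions, sessions):
    # Sessions that left without viewing a second page.
    return percent(single_page_sessions, sessions)


def click_through_rate(clicks, impressions):
    # Search clicks divided by search impressions.
    return percent(clicks, impressions)


def return_visit_rate(returning_users, total_users):
    # Share of users who came back after their first visit.
    return percent(returning_users, total_users)
```

For example, 450 single-page sessions out of 1,000 is a 45% bounce rate, and 30 clicks from 1,200 impressions is a 2.5% click-through rate.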

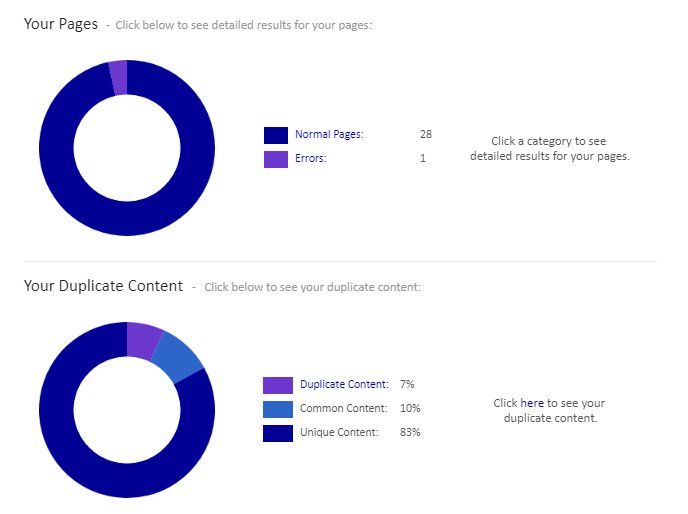

Additionally, search engines reward websites with original content and penalize those with duplicate content, both on and off the site. Content copied and pasted from Wikipedia will be penalized, and content reused on more than one page of your site counts as duplicate content. Surprisingly, I’ve encountered many websites with duplicate content generated by black-hat SEO agencies, so be careful not to fall into this trap. Audit your site for duplicate content.
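
A common way to approximate a duplicate-content check yourself is shingling: break each page into overlapping word sequences and measure the Jaccard similarity (shared sequences divided by all distinct sequences) between every pair of pages. This is a sketch of that general technique, not how any particular tool works; the 5-word shingle size, the 0.5 threshold, and the page texts are assumptions.

```python
import re
from itertools import combinations


def shingles(text, k=5):
    """Set of overlapping k-word sequences from the text (empty if under k words)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def near_duplicates(pages, threshold=0.5):
    """(page_a, page_b, similarity) for every pair at or above the threshold."""
    sets = {url: shingles(text) for url, text in pages.items()}
    pairs = []
    for a, b in combinations(sorted(sets), 2):
        score = jaccard(sets[a], sets[b])
        if score >= threshold:
            pairs.append((a, b, round(score, 2)))
    return pairs


# Hypothetical page texts.
PAGES = {
    "/waffles-philadelphia/": "We serve the best waffles in Philadelphia with fresh berries and real maple syrup every day",
    "/waffles-philly/": "We serve the best waffles in Philadelphia with fresh berries and real maple syrup every day",
    "/contact/": "Call us or stop by our shop on Market Street to place a catering order",
}
```

Each flagged pair is a candidate for one of the fixes discussed next: a redirect, a noindex tag, or a canonical tag.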

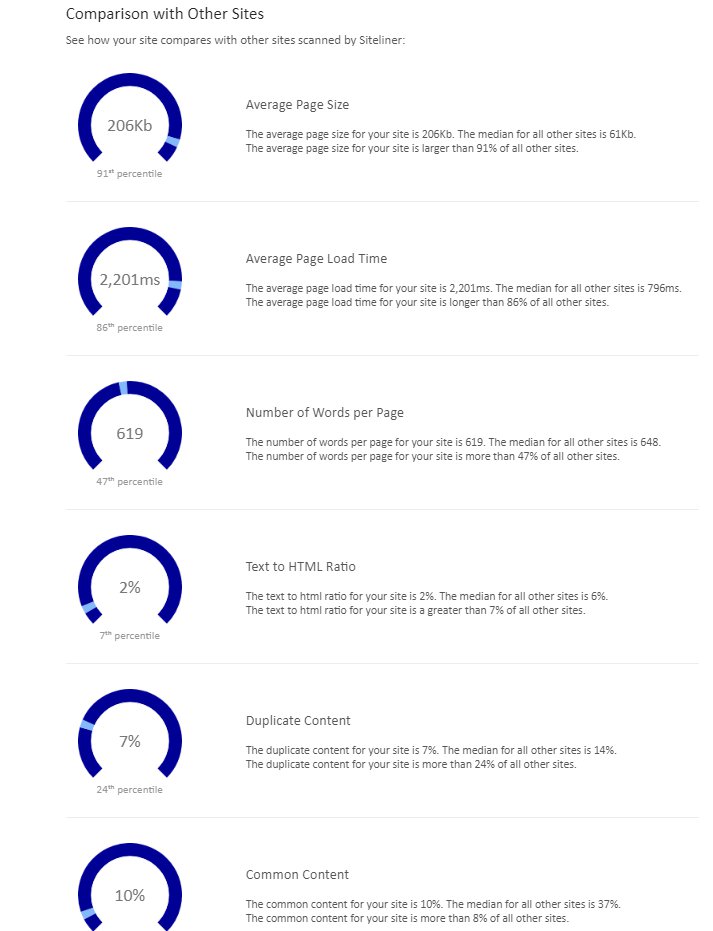

Check out http://www.siteliner.com to analyze your site for duplicate content. It will identify duplicated passages and show how you stack up against the competition.

It also has some other nifty tools to help with your audit.

There are many ways to deal with duplicate content. You can redirect a page, add a noindex tag, use Search Console’s Removals tool to temporarily hide a page from Google, or add a canonical tag to tell search engines which version of a page is the one to index. If you’d like to learn more about duplicate content, check out this awesome blog by Moz.
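
Before choosing a fix, it helps to see which pages already carry a noindex directive or point a canonical tag elsewhere. This sketch reads both from a page’s HTML; it cannot see directives sent in HTTP headers (such as `X-Robots-Tag`) or redirects, and the URLs are hypothetical.

```python
from html.parser import HTMLParser


class IndexingTagParser(HTMLParser):
    """Finds <link rel="canonical"> and <meta name="robots"> in a page."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.robots = ""

    def handle_starttag(self, tag, attrs):
        attrs = {name: (value or "") for name, value in attrs}
        if tag == "link" and "canonical" in attrs.get("rel", "").lower().split():
            self.canonical = attrs.get("href")
        elif tag == "meta" and attrs.get("name", "").lower() == "robots":
            self.robots = attrs.get("content", "").lower()


def indexing_status(html, page_url):
    """'noindex', 'canonicalized to <url>', or 'indexable' (trailing slashes ignored)."""
    parser = IndexingTagParser()
    parser.feed(html)
    if "noindex" in parser.robots:
        return "noindex"
    if parser.canonical and parser.canonical.rstrip("/") != page_url.rstrip("/"):
        return f"canonicalized to {parser.canonical}"
    return "indexable"
```

A page that should rank but comes back as noindex, or canonicalized to some other URL, is a high-priority fix.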

TIP: If you’re working on a new version of your site in a development environment, make sure it has a “noindex” tag so your site isn’t indexed twice. Also, make sure your DNS records and redirects are set up properly. I’ve seen many instances where www.site.com and site.com are both live and getting hit for duplicate content. I’ve also seen the same scenario with SSL certificates, where both the HTTP and HTTPS versions of the site are indexed. If you don’t know what that means, see whether your site loads both with and without the www; if one doesn’t redirect to the other, you’ve got a problem.
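
The www/non-www and HTTP/HTTPS check can be scripted: request all four variants of the homepage and confirm they end up at a single URL. A sketch using only the standard library, where `urlopen` follows redirects and `geturl()` reports the final address; the `resolve` parameter is a hypothetical hook added so the logic can be tested without network access, and the domain is a placeholder.

```python
from urllib.request import urlopen


def host_variants(domain):
    """The four ways to reach the homepage: http/https, with and without www."""
    bare = domain.removeprefix("www.")
    return [f"{scheme}://{host}/" for scheme in ("http", "https") for host in (bare, "www." + bare)]


def final_url(url):
    """Follow redirects and report where we ended up (network errors propagate)."""
    with urlopen(url, timeout=10) as resp:
        return resp.geturl()


def redirect_problems(domain, resolve=final_url):
    """Empty dict if every variant lands on the same URL; otherwise variant -> destination."""
    destinations = {url: resolve(url) for url in host_variants(domain)}
    return destinations if len(set(destinations.values())) > 1 else {}
```

An empty result means all four variants consolidate to one address; anything else shows exactly which variant is still serving its own copy of the site.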

Outbound links and cross-linking content

Just as a good research paper cites authoritative sources, so should your website. Believe it or not, search engines want to see that you’re linking out to relevant resources and articles. It’s also important to link to other pages on your own website to pass them authority and encourage click-throughs. You can review each page manually against these criteria, or automate the process with SEO software such as Semrush, Raven Tools, Ahrefs, or Screaming Frog.

Criteria search engines take into consideration when evaluating your links:

- Are they pointing to trustworthy sources?

- Are the links relevant to the content?

- Do the links use relevant anchor text?

- Is the website linking to any broken pages? You can use a tool like Link Checker to find out.
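
The link criteria above can be partly automated. This sketch extracts each link and its anchor text from a page’s HTML, marks it internal or external, and flags generic anchor text; `http_status` then helps spot broken links (4xx/5xx codes). The `GENERIC_ANCHORS` set is a hypothetical starting point, and some servers reject `HEAD` requests with a 405, so treat those as “check manually” rather than broken.

```python
from html.parser import HTMLParser
from typing import Optional
from urllib.error import HTTPError, URLError
from urllib.parse import urljoin, urlparse
from urllib.request import Request, urlopen

# Hypothetical starter list of anchor texts that say nothing about the target page.
GENERIC_ANCHORS = {"click here", "here", "read more", "this link"}


class LinkParser(HTMLParser):
    """Collects (absolute URL, anchor text) for every <a href> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []
        self._href = None
        self._text = ""

    def handle_starttag(self, tag, attrs):
        href = dict(attrs).get("href")
        if tag == "a" and href:
            self._href, self._text = urljoin(self.base_url, href), ""

    def handle_data(self, data):
        if self._href is not None:
            self._text += data

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, " ".join(self._text.split())))
            self._href = None


def audit_links(html, page_url):
    parser = LinkParser(page_url)
    parser.feed(html)
    site = urlparse(page_url).netloc
    return [
        {
            "url": url,
            "anchor": anchor,
            "external": urlparse(url).netloc != site,
            "generic_anchor": not anchor or anchor.lower() in GENERIC_ANCHORS,
        }
        for url, anchor in parser.links
    ]


def http_status(url, timeout=10.0) -> Optional[int]:
    """HEAD request: the final status code, or None if the host can't be reached."""
    try:
        with urlopen(Request(url, method="HEAD"), timeout=timeout) as resp:
            return resp.status
    except HTTPError as err:  # 4xx/5xx responses arrive as exceptions
        return err.code
    except URLError:  # DNS failure, refused connection, etc.
        return None
```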

SSL and SEO

Search engines favor secure websites served over HTTPS with a valid SSL certificate. An SSL certificate encrypts data sent between the user and your site, which matters most for websites that collect sensitive information like credit card details. Use an SSL checker tool to confirm that your certificate is installed properly.
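
You can also check a certificate from a script. A standard-library sketch, assuming the host serves HTTPS on port 443: `fetch_not_after` opens a verified TLS connection (so a broken or mismatched certificate raises an error, which is itself a finding), and `days_remaining` turns the certificate’s `notAfter` date into days left before it expires.

```python
import socket
import ssl
import time
from typing import Optional


def fetch_not_after(host, port=443, timeout=10.0):
    """Return the certificate's notAfter string, e.g. 'Jan 15 00:00:00 2030 GMT'.

    create_default_context() verifies the certificate chain and hostname, so an
    invalid or mismatched certificate raises ssl.SSLCertVerificationError here.
    """
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()["notAfter"]


def days_remaining(not_after, now: Optional[float] = None):
    """Whole days until expiry; negative once the certificate has expired."""
    expires = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return int((expires - current) // 86400)
```

Usage would look like `days_remaining(fetch_not_after("example.com"))`; a small number means renewal should go on the to-do list.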

Alt tags

Optimizing images with alt tags is one of the oldest tricks in the SEO book. If a website’s images don’t have alt tags, you’ll want to recommend adding them.
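
A quick way to find images without alt text is to scan the page’s HTML for `<img>` tags whose `alt` attribute is missing or blank. A minimal standard-library sketch with a hypothetical snippet; note that an intentionally empty `alt=""` is correct for purely decorative images, so review what it flags rather than filling in every one.

```python
from html.parser import HTMLParser


class ImageAltParser(HTMLParser):
    """Records the src of every <img> whose alt attribute is missing or blank."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attrs = dict(attrs)
        if not (attrs.get("alt") or "").strip():
            self.missing_alt.append(attrs.get("src") or "(no src)")


def images_missing_alt(html):
    parser = ImageAltParser()
    parser.feed(html)
    return parser.missing_alt
```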

These are some of the most important on-site SEO factors to consider when auditing a website. Many tools can quickly and effectively aid an on-site SEO audit; I prefer Semrush, but there are many great tools out there.

Here are other factors that software like Semrush can help you identify and audit:

- Incoming internal links – links pointing to a page from other pages on your own site

- Page crawl depth – how many clicks it takes to get to a specific page on your site

- HTTP status codes – errors, broken links etc.

- Security certificate issues – do you have an SSL installed? Is it installed properly?

- Server issues

- Website architecture issues

- Issues with hreflang values (used for multilingual websites)

- Hreflang conflicts within page source code

- Issues with incorrect hreflang links

- Pages with potentially missing hreflang attributes
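
One common hreflang problem is a missing “return link”: Google expects the annotations to be reciprocal, so if page A lists page B as an alternate, page B must list page A, or the annotations may be ignored. This sketch checks for that, assuming you’ve already collected each page’s hreflang annotations (for example from a crawler export) into a dict; the URLs are hypothetical.

```python
def missing_return_links(hreflang):
    """
    hreflang maps each page URL to the {language-code: alternate URL} annotations
    found on that page. Return (page, alternate) pairs where the alternate does not
    link back to the page.
    """
    problems = []
    for page, alternates in hreflang.items():
        for url in alternates.values():
            if url == page:
                continue  # a page listing itself is expected
            if page not in hreflang.get(url, {}).values():
                problems.append((page, url))
    return problems


# Hypothetical annotations: the French page never links back to the English one.
ANNOTATIONS = {
    "https://example.com/en/": {"en": "https://example.com/en/", "es": "https://example.com/es/", "fr": "https://example.com/fr/"},
    "https://example.com/es/": {"en": "https://example.com/en/", "es": "https://example.com/es/"},
    "https://example.com/fr/": {"fr": "https://example.com/fr/"},
}
```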

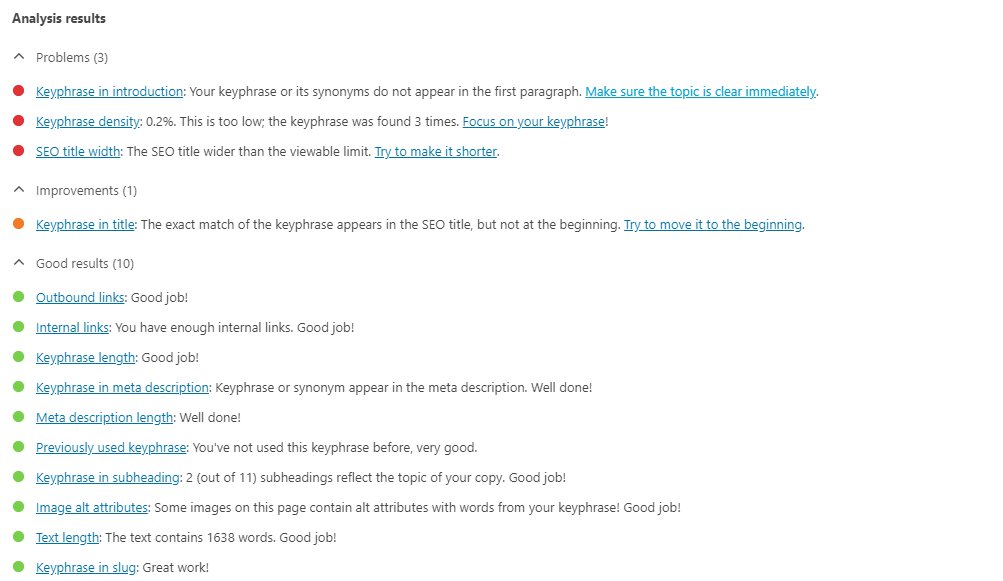

If you’re using WordPress, I highly recommend Yoast, which helps audit many on-site SEO factors from the WordPress admin panel (so you’ll need admin access to use it). Yoast can help with many of your SEO tasks but offers little in the way of reporting features.

Example of a Yoast page analysis:

Off-Site SEO Ranking Factors

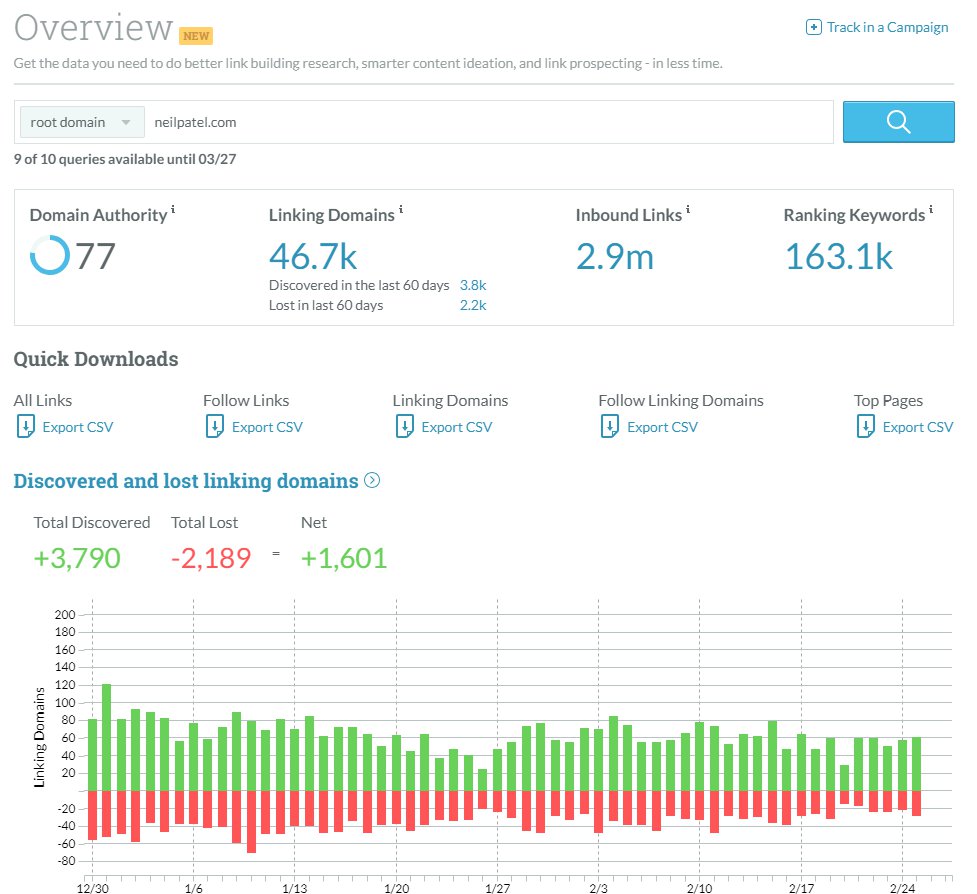

Inbound links – or backlinks

Inbound links, or backlinks, are a critical component of any SEO campaign. Backlinks are the internet’s way of vouching for your website; Moz scores this credibility with a metric it calls Domain Authority. Although the algorithms behind authority scores can be complex, auditing your backlinks is easy. There are plenty of great free SEO tools you can use in your audit; one of my favorites is Moz Link Explorer.

ProTip: Look up the top competitors and run a similar backlink audit to identify which backlinks they are getting and uncover link building opportunities.
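
The ProTip above boils down to a set difference: domains that link to a competitor but not to you. This sketch assumes you’ve exported each site’s backlinks as a list of linking-page URLs (from Moz or a similar tool); the domains are hypothetical, and a real audit would also weigh each domain’s quality before outreach.

```python
from urllib.parse import urlparse


def referring_domains(backlink_urls):
    """Reduce a backlink export (one linking-page URL per row) to unique domains."""
    return {urlparse(url).netloc.lower().removeprefix("www.") for url in backlink_urls}


def link_gap(yours, *competitors):
    """Domains linking to at least one competitor but not to you, sorted A-Z."""
    have = referring_domains(yours)
    theirs = set().union(*(referring_domains(c) for c in competitors))
    return sorted(theirs - have)


MINE = ["https://www.foodblog.example/best-brunch/", "https://news.example.org/story"]
RIVAL = ["https://foodblog.example/waffle-roundup/", "https://www.cityguide.example/things-to-do/"]
```

Here the food blog already links to both sites, so the city guide is the outreach opportunity.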

Learn more about link building strategies here

Social Signals

Social signals are the collective engagement metrics (shares, likes, comments, etc.) for a particular post. Search engines take social media engagement into consideration, which is why it’s important to audit social signals even if you’re not running a full-fledged social media campaign. Your audit should uncover which social media accounts are currently set up and identify engagement metrics for each.

Don’t think social media is right for your business? You might not be getting leads through social media, but search engines are still watching. Check out the case studies mentioned in this blog to learn more.

Conclusion

Search engine algorithms are complex, so there are no silver bullets when it comes to getting your website ranked for keywords. If you follow these guidelines and focus on creating high-quality, relevant, and valuable content, your website will be well positioned to rank, and rank well. If you have any questions or comments, I’m happy to help in any way possible. I hope you’ve enjoyed this article, and I look forward to writing others to help my fellow marketers.

Have a great day, good luck, and Godspeed!

Learn more about blacklists